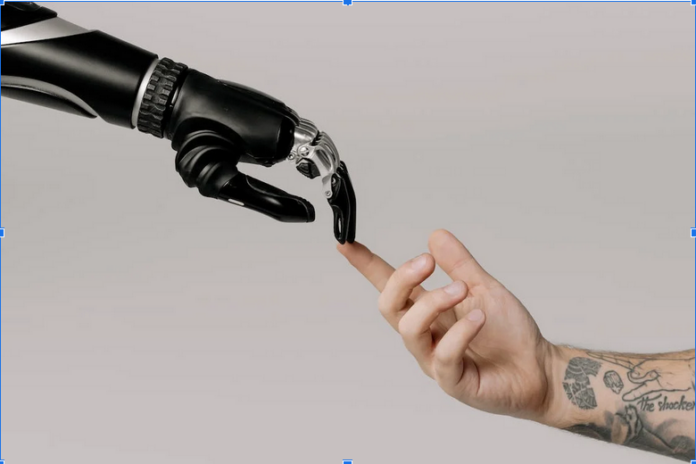

As artificial intelligence (AI) increasingly becomes an integral part of the financial services industry, there is a growing need to address the ethical considerations that come with its implementation. While AI has the potential to revolutionize finance by driving increased efficiency, cutting costs and enhancing the customer experience, it also raises questions about bias, privacy, transparency, and accountability. In this article, we explore the role of AI in finance, the benefits and challenges of its adoption, and the ethical considerations that must be taken into account to ensure its responsible use.

Understanding the Role of AI in Finance

The Rise of AI in Financial Services

In recent years, the adoption of AI in the financial services industry has grown significantly. AI-powered systems now drive chatbots and customer service, and support underwriting for loans and insurance. They are also used to manage investment portfolios, detect fraud, and analyze market data in real time. The market for AI in finance is projected to grow rapidly in the coming years, with some forecasts putting the total addressable market at $1,345 billion (roughly $1.3 trillion) by 2030.

Key Applications of AI in Finance

The applications of AI in finance are numerous. One of the most popular applications is the use of chatbots and virtual assistants to provide customer service. Chatbots can handle simple customer queries and complaints, freeing human staff members to deal with more complex issues.

AI can also be used for credit scoring, where algorithms use machine learning to assess a borrower’s creditworthiness by analyzing data from various sources, such as social media and transaction data. This can help banks and other financial institutions to underwrite loans more accurately and efficiently.

Another application of AI in finance is fraud detection. Fraudsters are sophisticated and constantly look for new ways to exploit vulnerabilities in systems. AI-powered fraud detection systems can analyze large volumes of data in real time and identify unusual patterns that could indicate fraud.
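To make "unusual patterns" concrete, here is a minimal sketch of one simple statistical approach: flagging transaction amounts that sit far from a customer's typical spending. It is an illustration only, not how any particular fraud system works. The function name `flag_unusual_amounts`, the sample amounts, and the threshold of 3.5 are assumptions chosen for the example. Production systems typically combine many more signals.

```python
from statistics import median


def flag_unusual_amounts(amounts, threshold=3.5):
    """Return the indices of amounts whose modified z-score exceeds threshold.

    The modified z-score uses the median and the median absolute deviation
    (MAD), so a single extreme value does not distort the baseline the way
    it would with a mean and standard deviation.
    """
    med = median(amounts)
    mad = median(abs(a - med) for a in amounts)
    if mad == 0:
        # No spread in the data: this method cannot rank anything as unusual.
        return []
    # 0.6745 rescales the MAD so scores are comparable to ordinary z-scores.
    return [
        i for i, a in enumerate(amounts)
        if 0.6745 * abs(a - med) / mad > threshold
    ]


# Hypothetical card history: seven ordinary purchases and one large outlier.
history = [42.0, 38.5, 51.0, 45.2, 40.1, 47.9, 2500.0, 44.3]
suspicious = flag_unusual_amounts(history)
print(suspicious)
```

A flagged index is only a prompt for review, not proof of fraud. A legitimate one-off purchase would be flagged in the same way.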

Benefits and Challenges of AI Adoption

The adoption of AI in finance has many benefits, including the potential to increase efficiency, reduce costs, and increase profitability. AI-powered algorithms can analyze large volumes of data, identifying patterns and trends that can be used to improve decision-making and gain a competitive edge.

However, the adoption of AI in finance is not without its challenges. One of the biggest is the potential for bias in AI algorithms. If the data used to train an AI system is biased, the system is likely to reproduce that bias. This could result in unfair outcomes for certain groups of people, perpetuating existing societal inequalities.

Another challenge is the potential for AI to be used for nefarious purposes, such as fraud or money laundering. AI-powered systems can be trained to mimic human behavior, making it harder to detect fraudulent activity.

Ethical Considerations in AI Implementation

The integration of AI has also changed trading practices. One product promoted in this space is Immediate Connect, which is described as combining quantum computing with AI algorithms to analyze vast amounts of data and make complex financial predictions.

While tools such as Immediate Connect are marketed as offering substantial gains and market insights, they also raise ethical considerations that demand careful attention. Financial institutions deciding whether to adopt such methods must balance innovation against responsibility.

Bias and Discrimination in AI Algorithms

One of the most significant ethical considerations in AI implementation is ensuring that algorithms are unbiased. Bias can manifest in many ways; for example, data sets may be skewed towards certain groups, or algorithms may be designed to favor certain outcomes. To prevent bias from creeping into AI systems, companies need to design and implement algorithms that are transparent and explainable.
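One way to make this kind of bias measurable is to compare outcomes across groups. The sketch below computes approval rates per group and the ratio between the lowest and highest rate, often called a disparate impact ratio. The group labels, the sample decisions, and the function names are hypothetical. The 0.8 cutoff mentioned in the comments is a heuristic borrowed from US employment guidance (the "four-fifths rule") and is used here purely as an illustration, not as a legal test for lending.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Map each group to its approval rate.

    decisions is an iterable of (group, approved) pairs.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest (1.0 means parity)."""
    rates = approval_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so no group is favored
    return min(rates.values()) / highest


# Hypothetical loan decisions: group A approved 8 of 10, group B 5 of 10.
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
ratio = disparate_impact_ratio(sample)
# A ratio below roughly 0.8 is a common signal that a closer look is warranted.
print(f"disparate impact ratio: {ratio:.3f}")
```

A single ratio cannot show why the rates differ, so a low value is a reason to investigate the data and the model rather than a verdict on either.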

One way to reduce bias is to ensure diversity in the teams that design and develop AI systems. Research suggests that diverse teams are less likely to produce biased results than homogeneous teams.

Data Privacy and Security Concerns

With increased reliance on AI systems comes increased concern about the security and privacy of data. AI systems require large amounts of data to function effectively, and this data may contain personal information that needs to be protected. Companies should ensure that their AI systems are built with privacy in mind and that they comply with all relevant data protection regulations.
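As one small example of building with privacy in mind, the sketch below replaces a customer identifier with a keyed hash and drops directly identifying fields before a record reaches an analytics or training pipeline. The field names, the `drop_fields` default, and the key handling are assumptions made for illustration. A real deployment would load the key from a secrets manager, and this step alone does not make a system compliant with any regulation.

```python
import hashlib
import hmac


def pseudonymize(customer_id: str, secret_key: bytes) -> str:
    """Return a stable keyed hash (HMAC-SHA256) of the customer identifier.

    The same id and key always give the same token, so records can still be
    joined, but the original id cannot be recomputed without the key.
    """
    digest = hmac.new(secret_key, customer_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


def strip_direct_identifiers(record, secret_key, drop_fields=("name", "email")):
    """Copy a record without the dropped fields and with a pseudonymous id."""
    cleaned = {k: v for k, v in record.items() if k not in drop_fields}
    cleaned["customer_id"] = pseudonymize(record["customer_id"], secret_key)
    return cleaned


# Hypothetical record and key, hard-coded only to keep the example runnable.
key = b"example-key-do-not-use-in-production"
raw = {
    "customer_id": "C-10293",
    "name": "Jane Doe",
    "email": "jane@example.com",
    "balance": 1520.75,
}
safe = strip_direct_identifiers(raw, key)
print(sorted(safe))
```

The remaining fields can still identify a person when combined, so the choice of which fields to keep deserves the same care as the hashing itself.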

Transparency and Explainability in AI Decision-Making

Another ethical consideration in AI implementation is the transparency and explainability of AI decision-making. Transparency is crucial to building trust in AI systems, particularly when it comes to making decisions that have a significant impact on people’s lives. Companies should strive to develop AI systems that are transparent and explainable so that users can understand how the system arrived at its decision.
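To show what an explainable decision can look like in practice, here is a minimal sketch built on a simple linear scoring model, where each feature's contribution to the result can be read off directly. The feature names, weights, and bias are invented for the example and do not come from any real credit model. More complex models generally need dedicated explanation techniques.

```python
import math

# Hypothetical weights for an illustrative credit-scoring model.
WEIGHTS = {
    "income_to_debt": 1.2,
    "missed_payments": -0.8,
    "years_of_history": 0.3,
}
BIAS = -0.5


def explain_decision(features):
    """Return the approval probability and each feature's contribution.

    Contributions are weight * value, so they add up (with the bias) to the
    score that is passed through the logistic function.
    """
    contributions = {name: WEIGHTS[name] * features[name] for name in WEIGHTS}
    logit = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-logit))
    # Largest absolute contribution first: the main drivers of the decision.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return probability, ranked


applicant = {"income_to_debt": 2.0, "missed_payments": 1.0, "years_of_history": 4.0}
probability, ranked = explain_decision(applicant)
print(f"approval probability: {probability:.2f}")
for name, value in ranked:
    print(f"{name}: {value:+.2f}")
```

Listing contributions in this way gives a customer or auditor a concrete answer to "what drove this decision?", which is the kind of transparency the paragraph above calls for.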

Balancing Innovation and Responsibility

Developing Ethical AI Frameworks

To ensure that AI is used in a responsible and ethical way, companies need to develop frameworks that promote ethical decision-making. These frameworks should include guidelines for the development and use of AI systems, as well as procedures for monitoring and auditing systems to ensure that they comply with ethical standards.

Ensuring Fairness and Inclusivity in AI Systems

To keep AI from exacerbating existing societal inequalities, companies need to make their AI systems fair and inclusive. This means designing systems that do not discriminate against certain groups of people and that are accessible to individuals with disabilities and to those who speak different languages.

Promoting Collaboration between Stakeholders

Finally, to ensure that AI is developed and used in a responsible and ethical way, companies need to promote collaboration between stakeholders. This includes working with other companies, regulators, and civil society organizations to develop best practices, share information, and build trust in AI systems.

Regulatory Landscape and Industry Standards

Current Regulations Governing AI in Finance

Currently, there is no comprehensive regulation governing the use of AI in finance. However, some jurisdictions, including the US and the EU, have issued guidelines and recommendations for the ethical use of AI in finance. In the US, for example, the Financial Stability Oversight Council has identified the need for regulators to understand the risks associated with AI and develop appropriate supervisory frameworks.

The Role of International Organizations and Industry Bodies

As the use of AI in finance becomes more widespread, industry bodies and international organizations are taking a more active role in shaping the conversation around AI ethics. For example, the IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems has developed a set of ethical guidelines for AI, with a focus on transparency, responsibility, and human oversight.

The Future of AI Regulation in Finance

As AI continues to transform the financial services industry, regulation will become more critical. Industry bodies and regulators will need to work together to develop a comprehensive regulatory framework that balances innovation and responsibility. In the future, it is likely that we will see more stringent regulation of AI in finance, with a greater focus on transparency, accountability, and fairness.

Conclusion

The adoption of AI in finance provides immense opportunities for the financial services industry to increase efficiency, streamline processes, and enhance the customer experience. However, it also raises significant ethical considerations that must be taken into account to ensure its responsible use. Companies need to be proactive in developing ethical frameworks, safeguarding transparency and privacy, and promoting collaboration between stakeholders so that the development and use of AI in finance benefits all.